Introduction to Data Analysis

Data analysis is a crucial process that involves inspecting, cleansing, transforming, and modeling data to discover useful information, draw conclusions, and support decision-making. In today’s data-driven world, businesses and organizations rely heavily on data analysis to gain valuable insights and stay competitive. However, the sheer volume and complexity of data can be overwhelming without the right tools and technologies.
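The four steps named above (inspecting, cleansing, transforming, and modeling) can be sketched with nothing but the Python standard library. The CSV content, column names, and function names below are hypothetical and exist only for illustration; the "model" here is just a set of summary statistics.

```python
import csv
import io
import statistics

# Hypothetical raw data: one row has a missing value, one has stray whitespace.
RAW_CSV = """region,units,price
north,10,2.50
south,,3.00
east, 7 ,2.00
west,12,2.50
"""

def load_rows(text):
    """Inspect: parse the CSV text into a list of dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def clean_rows(rows):
    """Cleanse: drop rows with a missing 'units' value and strip whitespace."""
    cleaned = []
    for row in rows:
        units = row["units"].strip()
        if not units:
            continue  # skip incomplete records
        cleaned.append({
            "region": row["region"].strip(),
            "units": int(units),
            "price": float(row["price"]),
        })
    return cleaned

def add_revenue(rows):
    """Transform: derive a revenue column from units and price."""
    return [{**row, "revenue": row["units"] * row["price"]} for row in rows]

def summarize(rows):
    """Model (in the simplest sense): summary statistics of revenue."""
    revenues = [row["revenue"] for row in rows]
    return {
        "count": len(revenues),
        "mean": statistics.mean(revenues),
        "total": sum(revenues),
    }

rows = add_revenue(clean_rows(load_rows(RAW_CSV)))
summary = summarize(rows)
print(summary)
```

Keeping each step in its own function makes it easy to swap a simple step for a more capable tool later without touching the rest of the pipeline.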

Importance of Using AI Tools for Data Analysis

Artificial Intelligence (AI) has changed the field of data analysis by automating and augmenting many tasks, which often leads to faster and more accurate results. AI tools can handle large datasets, identify patterns and trends, and generate meaningful visualizations and predictions. By using AI tools for data analysis, businesses can streamline their processes, make data-driven decisions, and uncover hidden opportunities.

Benefits of Using AI Tools for Data Analysis

There are several benefits to using AI tools for data analysis. First, they can process large volumes of data quickly, in some cases in near real time, so businesses can act on insights sooner. Second, they can automate repetitive tasks, saving time and reducing human error. Third, they can uncover complex relationships and patterns that human analysts might miss. Finally, they can improve prediction accuracy when trained on representative data, helping businesses make more informed decisions and mitigate risks.

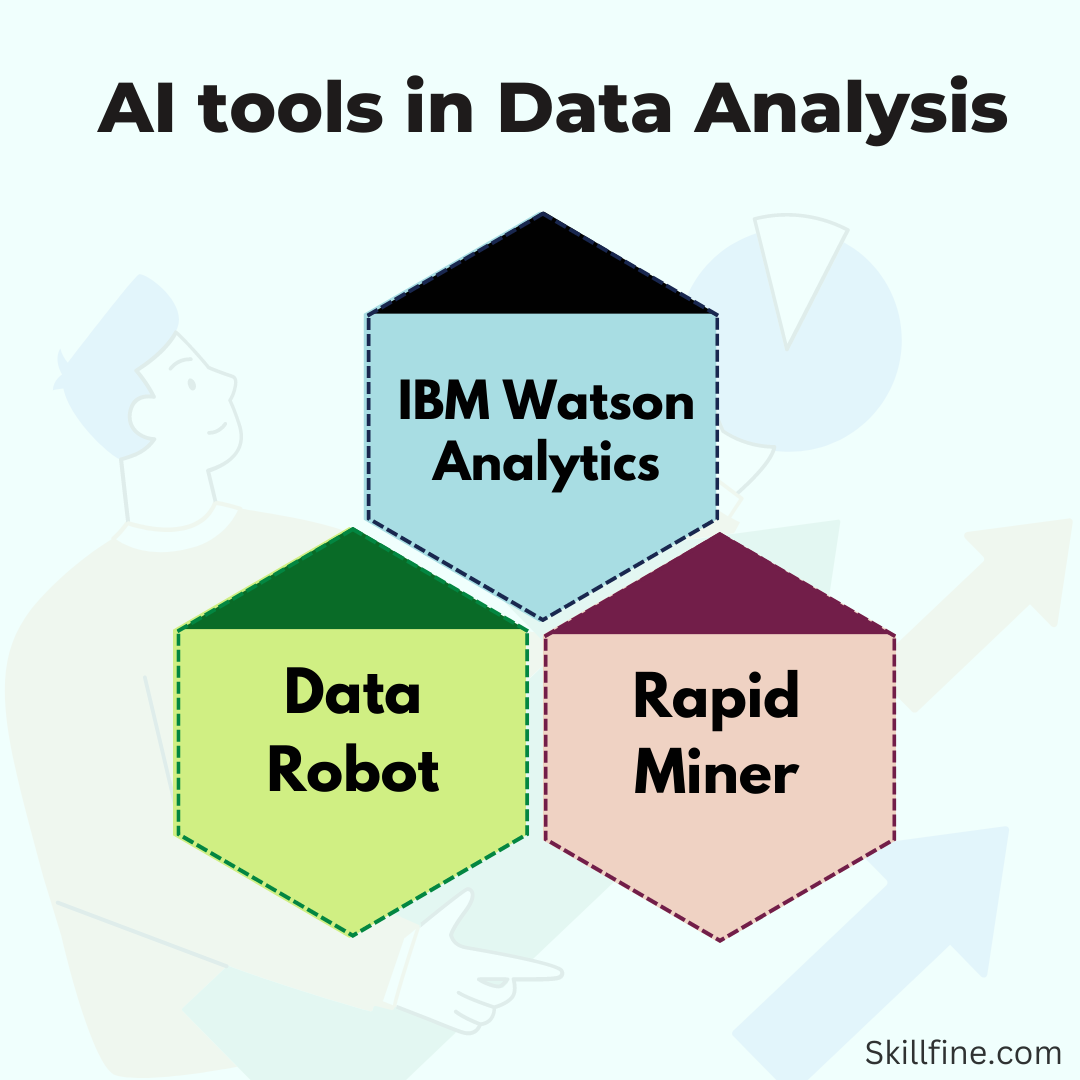

Overview of the Top 40 AI Tools for Data Analysis

Data Visualization Tools

- Tableau

Tableau is a powerful data visualization tool that helps users create interactive and visually appealing dashboards. It offers a wide range of visualizations, supports seamless data integration, and allows for easy sharing and collaboration.

- QlikView

QlikView is another popular data visualization tool that enables users to explore and analyze data from various sources. It offers drag-and-drop functionality, supports dynamic filtering, and provides interactive visualizations.

- Power BI

Power BI is a comprehensive business intelligence tool that allows users to connect, analyze, and visualize data. It offers a user-friendly interface, supports real-time data updates, and provides advanced analytics capabilities.

- Matplotlib

Matplotlib is a Python library widely used for creating static, animated, and interactive visualizations. It offers a wide range of customization options, making it suitable for both simple and complex visualizations.

- Seaborn

Seaborn is a Python data visualization library built on top of Matplotlib. It provides a high-level interface for creating attractive statistical graphics, making it ideal for exploratory data analysis.

Predictive Analysis Tools

- Logistic Regression

Logistic regression is a statistical modeling technique used for predicting binary outcomes. It models the probability of the outcome as a function of one or more independent variables, so users can make predictions from new input data.
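As a minimal sketch of the idea, the following fits a one-feature logistic regression with plain batch gradient descent. The data (hours studied versus pass or fail), the learning rate, and the function names are assumptions made for illustration; in practice a library implementation would normally be used instead.

```python
import math

def sigmoid(z):
    """Map any real number to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(xs, ys, lr=0.1, epochs=2000):
    """Fit weight w and bias b for one feature by gradient descent on log loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            error = sigmoid(w * x + b) - y  # predicted probability minus label
            grad_w += error * x
            grad_b += error
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b

def predict_proba(x, w, b):
    """Predicted probability that the outcome is 1 for input x."""
    return sigmoid(w * x + b)

# Hypothetical data: hours studied -> passed (1) or failed (0)
hours = [0.5, 1.0, 1.5, 2.0, 3.0, 3.5, 4.0, 5.0]
passed = [0, 0, 0, 0, 1, 1, 1, 1]

w, b = fit_logistic(hours, passed)
print(f"P(pass | 1 hour)  = {predict_proba(1.0, w, b):.2f}")
print(f"P(pass | 4 hours) = {predict_proba(4.0, w, b):.2f}")
```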

- Decision Trees

Decision trees are a popular family of machine learning algorithms used for classification and regression tasks. They build a flowchart-like model of decisions and their possible consequences, which makes the results easy to interpret and explain.
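Each decision in such a flowchart is a split on one feature. The sketch below shows how a single split could be chosen using Gini impurity, one common criterion; the age and purchase data are hypothetical, and a full tree would repeat this search recursively on each side of the split.

```python
def gini(labels):
    """Gini impurity of a list of class labels (0.0 means perfectly pure)."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))

def best_split(values, labels):
    """Find the threshold on one numeric feature with the lowest weighted impurity."""
    best_threshold, best_score = None, float("inf")
    candidates = sorted(set(values))
    for lo, hi in zip(candidates, candidates[1:]):
        threshold = (lo + hi) / 2  # midpoint between adjacent distinct values
        left = [y for x, y in zip(values, labels) if x <= threshold]
        right = [y for x, y in zip(values, labels) if x > threshold]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best_score:
            best_threshold, best_score = threshold, score
    return best_threshold, best_score

# Hypothetical data: customer age -> bought the product
ages = [22, 25, 30, 35, 40, 45, 50, 55]
bought = ["no", "no", "no", "yes", "yes", "yes", "yes", "yes"]

threshold, impurity = best_split(ages, bought)
print(f"split at age <= {threshold} (weighted impurity {impurity:.2f})")
```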

- Random Forests

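Random forests are an ensemble learning method that combines the predictions of many decision trees, each trained on a random sample of the data. Compared with a single tree, they are less prone to overfitting and often give accurate results for both classification and regression problems. Whether missing values are handled automatically depends on the implementation.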
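The ensemble idea can be illustrated with a deliberately small toy: each "tree" below is only a one-level split (a stump) trained on a bootstrap sample and a random subset of features, and the forest predicts by majority vote. The data and all names are hypothetical, and real implementations grow much deeper trees.

```python
import random
from collections import Counter

def gini(labels):
    """Gini impurity of a list of class labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))

def majority(labels):
    """Most common label in a non-empty list."""
    return Counter(labels).most_common(1)[0][0]

def fit_stump(rows, labels, feature_indices):
    """Fit a one-level tree: the best (feature, threshold) among the given features."""
    best = None  # (score, feature, threshold, left_label, right_label)
    for f in feature_indices:
        values = sorted({row[f] for row in rows})
        for lo, hi in zip(values, values[1:]):
            t = (lo + hi) / 2
            left = [y for row, y in zip(rows, labels) if row[f] <= t]
            right = [y for row, y in zip(rows, labels) if row[f] > t]
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
            if best is None or score < best[0]:
                best = (score, f, t, majority(left), majority(right))
    if best is None:  # no split possible: always predict the majority label
        label = majority(labels)
        return (0, float("inf"), label, label)
    return best[1:]

def fit_forest(rows, labels, n_trees=25, seed=0):
    """Train stumps on bootstrap samples, each seeing a random subset of features."""
    rng = random.Random(seed)
    n_features = len(rows[0])
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(len(rows)) for _ in rows]  # sample with replacement
        feats = rng.sample(range(n_features), k=max(1, n_features // 2))
        forest.append(fit_stump([rows[i] for i in idx], [labels[i] for i in idx], feats))
    return forest

def predict(forest, row):
    """Majority vote over all stumps."""
    votes = [left if row[f] <= t else right for f, t, left, right in forest]
    return majority(votes)

# Hypothetical two-feature data with two well-separated classes
rows = [(1, 2), (2, 1), (2, 3), (3, 2), (7, 8), (8, 7), (8, 9), (9, 8)]
labels = [0, 0, 0, 0, 1, 1, 1, 1]

forest = fit_forest(rows, labels)
print(predict(forest, (2, 2)), predict(forest, (8, 8)))
```

Because every stump sees slightly different data and features, individual mistakes tend to be outvoted, which is the intuition behind the reduced overfitting.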

Text Mining and Sentiment Analysis Tools

- Python

Python is a versatile programming language widely used for text mining and sentiment analysis tasks. It offers powerful libraries such as NLTK and spaCy, which provide tools for text processing and tokenization; NLTK also includes utilities for sentiment analysis.
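To give a feel for what sentiment scoring involves, here is a minimal lexicon-based sketch in plain Python. It does not use NLTK or any other library's API; the tiny word list, the negation rule, and the function names are assumptions for illustration, and real tools rely on much larger lexicons or trained models.

```python
import re

# Hypothetical mini-lexicon: word -> sentiment weight
LEXICON = {"good": 1, "great": 2, "love": 2, "bad": -1, "terrible": -2, "hate": -2}
NEGATIONS = {"not", "never", "no"}

def tokenize(text):
    """Lowercase the text and extract word tokens."""
    return re.findall(r"[a-z']+", text.lower())

def sentiment_score(text):
    """Sum word weights; flip the sign of a word that directly follows a negation."""
    tokens = tokenize(text)
    score = 0
    for i, token in enumerate(tokens):
        value = LEXICON.get(token, 0)
        if i > 0 and tokens[i - 1] in NEGATIONS:
            value = -value
        score += value
    return score

def classify(text):
    """Turn the numeric score into a coarse label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

for sentence in ["I love this great product", "This is not good", "It arrived on Tuesday"]:
    print(sentence, "->", classify(sentence))
```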

- R

R is a statistical programming language known for its extensive libraries and packages for text mining and sentiment analysis. It offers robust tools for data cleaning, text preprocessing, and sentiment scoring.

- NLTK

NLTK (Natural Language Toolkit) is a Python library that provides tools and resources for natural language processing tasks, including text classification, tokenization, stemming, and sentiment analysis.
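Tokenization and stemming are easy to picture with a small standard-library sketch. This is not NLTK's implementation: the regular expression and the crude suffix list below are assumptions for illustration, and real stemmers apply far more rules.

```python
import re
from collections import Counter

# Crude, illustrative suffix list; checked in this order.
SUFFIXES = ("ing", "ed", "es", "s")

def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z]+", text.lower())

def stem(word):
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word

text = "The analysts analyzed reports while reporting trends."
tokens = tokenize(text)
stems = [stem(t) for t in tokens]
counts = Counter(stems)

print(tokens)
print(stems)
print(counts.most_common(1))
```

Stemming lets "reports" and "reporting" be counted as the same term, which is why it is a common preprocessing step before classification or frequency analysis.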

- spaCy

spaCy is an open-source library for advanced natural language processing in Python. It offers efficient tokenization, named entity recognition, part-of-speech tagging, and dependency parsing.

Big Data Tools

- Hadoop

Hadoop is a popular open-source framework for processing and analyzing large datasets in a distributed computing environment. It provides fault tolerance, scalability, and high performance for big data analytics.

- Hive

Hive is a data warehousing infrastructure built on top of Hadoop. It allows users to query and analyze large datasets using a SQL-like language, making it accessible to users familiar with traditional relational databases.
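The kind of SQL-like aggregation query this describes can be previewed locally. In the sketch below, Python's built-in sqlite3 module is only a stand-in for Hive, and the table and column names are hypothetical; on a real cluster a similar query would run over data stored in Hadoop.

```python
import sqlite3

# sqlite3 is a local stand-in here, not Hive itself.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE page_views (country TEXT, views INTEGER)")  # hypothetical table
conn.executemany(
    "INSERT INTO page_views VALUES (?, ?)",
    [("US", 120), ("DE", 45), ("US", 80), ("IN", 150), ("DE", 30)],
)

# A typical analytical query: total views per country, largest first.
query = """
    SELECT country, SUM(views) AS total_views
    FROM page_views
    GROUP BY country
    ORDER BY total_views DESC
"""
results = conn.execute(query).fetchall()
conn.close()

print(results)
```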

- Spark

Spark is a fast and general-purpose cluster computing system that provides in-memory processing capabilities. It offers a wide range of libraries for data analysis, machine learning, and graph processing.

- Pig

Pig is a high-level platform for data analysis on Hadoop. Its scripting language, Pig Latin, simplifies the process of writing MapReduce programs and allows users to express complex data transformations using a simple syntax.
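The MapReduce programs mentioned here follow a map, shuffle, and reduce pattern. The following single-machine sketch of a word count shows the three phases in plain Python; the function names and input lines are hypothetical, and a real job would distribute each phase across a cluster.

```python
from collections import defaultdict

def map_phase(line):
    """Map: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]

def shuffle_phase(pairs):
    """Shuffle: group all emitted values by key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups

def reduce_phase(key, values):
    """Reduce: combine the values for one key into a single result."""
    return key, sum(values)

lines = ["big data tools", "big data analysis", "data pipelines"]

mapped = [pair for line in lines for pair in map_phase(line)]
grouped = shuffle_phase(mapped)
word_counts = dict(reduce_phase(key, values) for key, values in grouped.items())

print(word_counts)
```

Higher-level languages exist largely so that analysts can describe the grouping and aggregation they want without writing these phases by hand.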

- HBase

HBase is a distributed, scalable, and column-oriented NoSQL database built on top of Hadoop. It provides random access to big data, making it suitable for real-time data analysis and processing.

Natural Language Processing Tools

- NLTK

NLTK (Natural Language Toolkit) is a comprehensive library for natural language processing in Python. It offers tools for text classification, tokenization, stemming, part-of-speech tagging, and named entity recognition.

- spaCy

spaCy is a popular Python library for natural language processing. It provides efficient tools for text preprocessing, sentence boundary detection, named entity recognition, and dependency parsing.

- Stanford CoreNLP

Stanford CoreNLP is a suite of natural language processing tools developed by Stanford University. It offers robust tools for tokenization, part-of-speech tagging, named entity recognition, and sentiment analysis.

- OpenNLP

OpenNLP (Open Natural Language Processing) is a Java library that provides tools for various natural language processing tasks, including sentence detection, tokenization, part-of-speech tagging, and named entity recognition.

Risk Assessment Tools

- Monte Carlo Simulation

Monte Carlo simulation is a statistical technique used for risk assessment and decision-making. It generates multiple scenarios by sampling from probability distributions, allowing users to assess the likelihood of different outcomes.
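A minimal sketch of the technique, using only the standard library: the project cost below is the sum of three uncertain parts, and repeated sampling gives an estimate of how likely the total is to exceed a budget. All distributions, parameters, and the budget are hypothetical.

```python
import random
import statistics

def simulate_project_cost(n_trials=10_000, seed=42):
    """Sample the total cost many times from three uncertain components."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    totals = []
    for _ in range(n_trials):
        labor = rng.triangular(80, 150, 100)  # low, high, most likely
        materials = rng.gauss(50, 5)          # mean, standard deviation
        delay_penalty = 20 if rng.random() < 0.1 else 0  # 10% chance of a penalty
        totals.append(labor + materials + delay_penalty)
    return totals

totals = simulate_project_cost()
budget = 180
mean_cost = statistics.mean(totals)
prob_over_budget = sum(t > budget for t in totals) / len(totals)

print(f"mean cost: {mean_cost:.1f}")
print(f"P(cost > {budget}): {prob_over_budget:.3f}")
```

Increasing `n_trials` narrows the sampling error of the estimates at the cost of more computation.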

- Stress Testing

Stress testing is a risk assessment technique that evaluates the performance of a system under extreme conditions. It helps identify vulnerabilities, assess resilience, and mitigate potential risks.
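In a financial setting, one simple form of stress testing applies predefined extreme scenarios to a portfolio and checks the resulting loss against a limit. The portfolio values, scenario shocks, and the 20% loss limit in this sketch are all hypothetical.

```python
# Hypothetical portfolio: asset class -> current value
portfolio = {"equities": 600_000, "bonds": 300_000, "cash": 100_000}

# Hypothetical extreme scenarios: asset class -> fractional change in value
scenarios = {
    "equity crash": {"equities": -0.40, "bonds": 0.05, "cash": 0.0},
    "rate shock": {"equities": -0.10, "bonds": -0.15, "cash": 0.0},
    "stagflation": {"equities": -0.25, "bonds": -0.10, "cash": -0.05},
}

def stressed_value(portfolio, shocks):
    """Portfolio value after applying one scenario's shocks."""
    return sum(value * (1 + shocks.get(asset, 0.0)) for asset, value in portfolio.items())

def run_stress_test(portfolio, scenarios, max_loss_fraction=0.20):
    """Report the loss under each scenario and whether it breaches the limit."""
    base = sum(portfolio.values())
    report = {}
    for name, shocks in scenarios.items():
        loss_fraction = (base - stressed_value(portfolio, shocks)) / base
        report[name] = {
            "loss_fraction": round(loss_fraction, 4),
            "breach": loss_fraction > max_loss_fraction,
        }
    return report

report = run_stress_test(portfolio, scenarios)
for name, result in report.items():
    print(name, result)
```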

Video Processing Tools

- OpenCV

OpenCV (Open Source Computer Vision Library) is a popular open-source computer vision library. It provides tools for video analysis, image processing, object detection, and machine learning.

- ffmpeg

ffmpeg is a powerful multimedia framework that enables users to decode, encode, transcode, and stream audio and video. It supports a wide range of formats and provides extensive video processing capabilities.
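Transcoding is typically driven from the command line, and scripts often assemble the command programmatically. The sketch below builds, but does not run, an ffmpeg command that re-encodes video to H.264 and audio to AAC; the file names and default settings are hypothetical, and it assumes an ffmpeg build that includes the libx264 encoder.

```python
import shlex

def build_transcode_command(src, dst, crf=23, audio_bitrate="128k"):
    """Build an ffmpeg argument list that re-encodes video to H.264 and audio to AAC."""
    return [
        "ffmpeg",
        "-i", src,              # input file
        "-c:v", "libx264",      # video codec
        "-crf", str(crf),       # quality: lower means higher quality, larger files
        "-c:a", "aac",          # audio codec
        "-b:a", audio_bitrate,  # audio bitrate
        dst,                    # output file
    ]

cmd = build_transcode_command("input.mov", "output.mp4")  # hypothetical file names
command_line = shlex.join(cmd)
print(command_line)

# To execute it (requires ffmpeg on PATH):
#   import subprocess
#   subprocess.run(cmd, check=True)
```

Passing the arguments as a list rather than a single string avoids shell quoting problems when file names contain spaces.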

- VLC

VLC (originally VideoLAN Client) is a free and open-source media player that supports a wide range of video formats. It offers advanced features such as video filtering, streaming, and transcoding.

Machine Learning and Model Building Tools

- TensorFlow

Developed by Google, TensorFlow is an open-source library that provides a flexible ecosystem for building and deploying machine learning models. It offers a wide range of tools and resources to simplify the process of developing AI-powered applications.

- PyTorch

Widely used in the research community, PyTorch is a popular open-source machine learning framework. With its dynamic computational graph and intuitive interface, PyTorch allows developers to quickly iterate on ideas and experiment with different models.
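The phrase "dynamic computational graph" means the graph is recorded while ordinary code runs, so Python control flow can change its shape from one run to the next. The toy class below illustrates that idea for scalars only. It is not PyTorch's API; the `Value` class and its methods are hypothetical names chosen for this sketch.

```python
class Value:
    """A scalar that records the operations applied to it so gradients can be computed."""

    def __init__(self, data, parents=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents
        self._backward_fn = None  # pushes this node's gradient to its parents

    def __add__(self, other):
        out = Value(self.data + other.data, (self, other))
        def backward_fn():
            self.grad += out.grad
            other.grad += out.grad
        out._backward_fn = backward_fn
        return out

    def __mul__(self, other):
        out = Value(self.data * other.data, (self, other))
        def backward_fn():
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad
        out._backward_fn = backward_fn
        return out

    def backward(self):
        """Order the recorded graph topologically, then apply the chain rule backwards."""
        order, seen = [], set()
        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)
        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            if node._backward_fn is not None:
                node._backward_fn()

x, w, b = Value(3.0), Value(2.0), Value(1.0)

# The graph is built as this ordinary Python code runs, including the branch.
y = w * x + b
if y.data > 5:
    y = y * y

y.backward()
print(y.data, w.grad, x.grad, b.grad)
```

Because the branch is taken only for some inputs, the recorded graph differs between runs, which is what makes this style convenient for quick experimentation.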

- Scikit-learn

Scikit-learn is a powerful and user-friendly machine learning library in Python. It provides a comprehensive set of tools for data preprocessing, model selection, and evaluation, making it an ideal choice for beginners and experienced practitioners alike.

Natural Language Processing Tools

- spaCy

spaCy is a Python library that offers efficient and scalable solutions for natural language processing tasks. It provides pre-trained models, tokenization, named entity recognition, part-of-speech tagging, and dependency parsing, among other functionalities.

- Stanford CoreNLP

Developed by Stanford University, CoreNLP is a suite of natural language processing tools that support various languages. It includes modules for tokenization, sentence splitting, part-of-speech tagging, named entity recognition, sentiment analysis, and more.

Data Integration and Management Tools

- Fivetran

Fivetran is a cloud data integration platform that enables organizations to centralize and automate data pipelines. It supports a wide range of data sources and destinations, eliminating the need for manual data extraction and transformation.

- Informatica

Informatica offers a comprehensive suite of data integration and management tools. Its powerful platform enables enterprises to connect, integrate, and govern data from various sources, ensuring data quality and consistency.

- Talend

Talend is a data integration and management platform with open-source roots that provides a unified environment for designing, deploying, and managing data pipelines. It offers a wide range of connectors and transformations to handle complex data integration scenarios.

- Trifacta

Trifacta is a data preparation platform that helps organizations transform raw data into clean and structured formats. Its intuitive user interface and machine learning algorithms enable users to visually explore, clean, and enrich data without writing complex code.

Data Security and Privacy Tools

- Palo Alto Networks Cortex XDR

Cortex XDR is an advanced threat detection and response platform that uses AI and machine learning to identify and mitigate security risks. It provides real-time visibility into network traffic, endpoints, and cloud environments, enabling proactive threat hunting and incident response.

- CrowdStrike Falcon

Falcon is a cloud-native endpoint protection platform that leverages AI and behavioral analytics to detect and prevent cyber threats. It offers real-time visibility, threat intelligence, and automated response capabilities to secure endpoints across the organization.

- Symantec Data Loss Prevention

Symantec Data Loss Prevention (DLP) is a comprehensive data protection solution that helps organizations prevent data leaks and comply with regulations. It uses AI and machine learning to classify and protect sensitive data, whether it is at rest, in motion, or in use.

Data Collaboration and Sharing Tools

- Neptune.ai

Neptune.ai is a collaborative platform for data science projects. It allows teams to track and reproduce experiments, share results, and collaborate on model development. With Neptune.ai, data scientists can easily manage and document their work, increasing productivity and reproducibility.

- JupyterHub

JupyterHub is an open-source tool that provides a multi-user environment for running Jupyter notebooks. It allows teams to share and collaborate on data analysis projects in a secure and scalable manner. JupyterHub supports various programming languages and provides a flexible environment for data exploration and visualization.

AI Tools for Cloud-Based Data Analysis

Cloud-based AI tools use the scalability and flexibility of cloud computing to perform data analysis tasks. They provide on-demand resources, pay-as-you-go pricing, and integration with cloud storage and compute services. Examples include Google Cloud AI Platform (now part of Vertex AI), Amazon SageMaker, and Microsoft Azure Machine Learning.

AI Tools for Data Analysis in Specific Industries

AI tools for data analysis in specific industries cater to the unique needs and challenges of different sectors. For example, in healthcare, tools like IBM Watson Health and Cerner provide advanced analytics and insights for medical research and patient care. In finance, tools like Bloomberg Terminal and FactSet offer market data analysis and investment research capabilities. In retail, tools like Salesforce Commerce Cloud and Adobe Analytics enable businesses to analyze customer behavior and optimize marketing strategies.

AI Tools for Data Analysis in Research and Academia

In research and academia, AI tools help researchers and educators analyze and interpret data. They support statistical analysis, data visualization, and machine learning, facilitating scientific discovery and academic research. Examples include RStudio, Jupyter Notebook, and MATLAB.

Conclusion: Choosing the Right AI Tools for Your Data Analysis Needs

In conclusion, the field of data analysis has greatly benefited from the advancements in AI technology. The top 40 AI tools outlined in this article cover a wide range of data analysis tasks, from visualization and predictive analysis to natural language processing and big data processing. When choosing the right AI tools for your data analysis needs, consider factors such as the specific tasks you need to perform, the scalability and flexibility required, and the level of expertise and resources available.

By leveraging the power of AI tools, businesses and organizations can unlock the full potential of their data and gain a competitive edge in today’s data-driven world.

Ready to take your data analysis to the next level? Explore the top 40 AI tools mentioned in this article and find the perfect fit for your data analysis needs. Whether you are looking for advanced visualizations, predictive analysis capabilities, or natural language processing tools, there is an AI tool out there that can help you make sense of your data. Start leveraging the power of AI today and unlock valuable insights that can drive your business forward.
